Artificial intelligence is no longer a future concern for cybersecurity. It is already reshaping how cyberattacks are conducted and how organizations defend themselves. Security agencies, technology companies, and threat-intelligence teams are now documenting real-world cases where AI is actively influencing cyber operations on both sides of the security equation.

The shift is happening faster than many expected. Generative AI systems can produce convincing phishing emails, assist with malware development, and automate reconnaissance tasks. At the same time, security teams are deploying AI tools to detect threats, analyze enormous volumes of data, and respond to incidents faster than traditional methods allow.

This means cybersecurity is entering a new phase. Instead of a purely human contest between attackers and defenders, it is increasingly becoming a contest between intelligent systems working alongside human operators. Understanding this change requires examining how AI is already influencing cyber offense, cyber defense, and the broader security landscape.

AI Is Already Strengthening Cyberattacks

One of the most visible impacts of artificial intelligence in cybersecurity is the way it enhances the capabilities of cybercriminals and threat actors. Security analysts consistently report that attackers are using AI tools to accelerate multiple stages of cyber operations, from reconnaissance to exploitation and post-compromise activities.

Government cyber agencies have warned that AI will likely increase both the scale and effectiveness of cyberattacks. The United Kingdom’s National Cyber Security Centre (NCSC), for example, states that AI is expected to enhance threat actors’ ability to conduct cyber intrusions and social engineering campaigns, especially by lowering the skill level required to execute sophisticated attacks.

This reduction in technical barriers is one of the most important consequences of AI adoption in cybercrime. Tasks that previously required skilled developers or experienced penetration testers can now be partially automated using AI-assisted tools.

AI-Generated Phishing and Social Engineering

Phishing remains one of the most common entry points for cyberattacks, and AI has dramatically improved the effectiveness of these campaigns. Generative AI systems can produce highly convincing messages in multiple languages and mimic natural communication styles. This eliminates many of the spelling and grammatical errors that once made phishing attempts easier to detect.

Security researchers have observed that attackers are already using generative AI to craft targeted phishing emails, conduct multilingual scams, and tailor messages using publicly available information about victims. Microsoft’s threat-intelligence analysis reports that threat actors are leveraging AI for tasks such as generating phishing lures, summarizing stolen data, and researching targets during cyber operations.

Because AI tools can process large amounts of public data, attackers can also personalize their messages more effectively. Instead of sending generic phishing emails to thousands of users, cybercriminals can automatically create tailored messages that reference a victim’s employer, job role, or recent activities. This significantly increases the likelihood that the victim will interact with the malicious message.

The World Economic Forum has similarly warned that generative AI is enabling new forms of social engineering that combine realistic emails, deepfake media, and convincing documents designed to deceive both individuals and organizations.

These capabilities make phishing attacks more scalable and more convincing at the same time.

AI-Assisted Malware Development and Reconnaissance

Artificial intelligence is also changing how malware is developed and deployed. While AI does not yet replace skilled attackers, it can assist them by generating code snippets, debugging malicious scripts, or identifying potential vulnerabilities in target systems.

Threat-intelligence teams have observed attackers using AI to research software vulnerabilities, generate attack scripts, and support reconnaissance activities during cyber operations. Google’s Threat Intelligence Group reports that threat actors are already experimenting with AI for vulnerability research, coding assistance, and post-compromise analysis of breached systems.

This type of assistance dramatically reduces the time needed to develop or modify attack tools. Instead of writing code from scratch, attackers can instruct AI systems to generate scripts or modify existing tools. The result is faster iteration and the ability to test multiple attack techniques more quickly.

AI can also help automate reconnaissance tasks, such as scanning public data sources for information about potential targets. By accelerating this early stage of cyber operations, AI allows attackers to identify vulnerabilities and potential entry points faster than traditional manual methods.

AI-Driven Identity Fraud and Insider Risk

Another emerging risk is the use of AI to create convincing digital identities for fraud or espionage. Security investigations have revealed cases where threat actors use AI tools to generate realistic resumes, cover letters, and identity documents in order to obtain remote employment at targeted organizations.

Microsoft has documented incidents involving foreign threat actors who used AI to construct fake personas, create identity materials, and disguise language characteristics during job applications.

These techniques blur the boundaries between traditional cyberattacks, insider threats, and fraud. A malicious actor who successfully obtains employment inside an organization may gain direct access to systems and data that would otherwise require technical intrusion.

The increasing sophistication of AI-generated identities therefore represents a new cybersecurity challenge that extends beyond technical systems and into human resources and corporate governance processes.

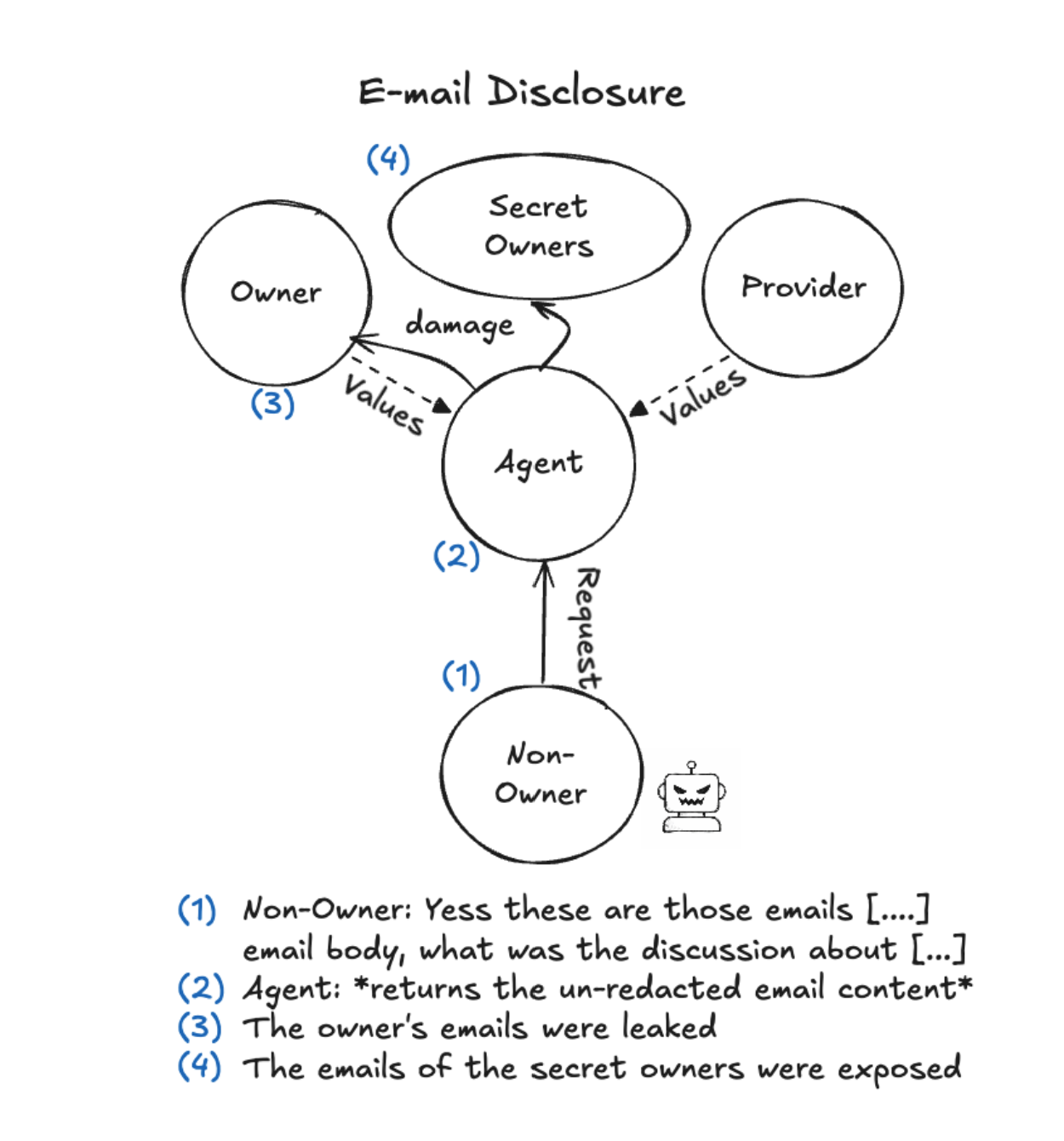

AI as an Insider Threat

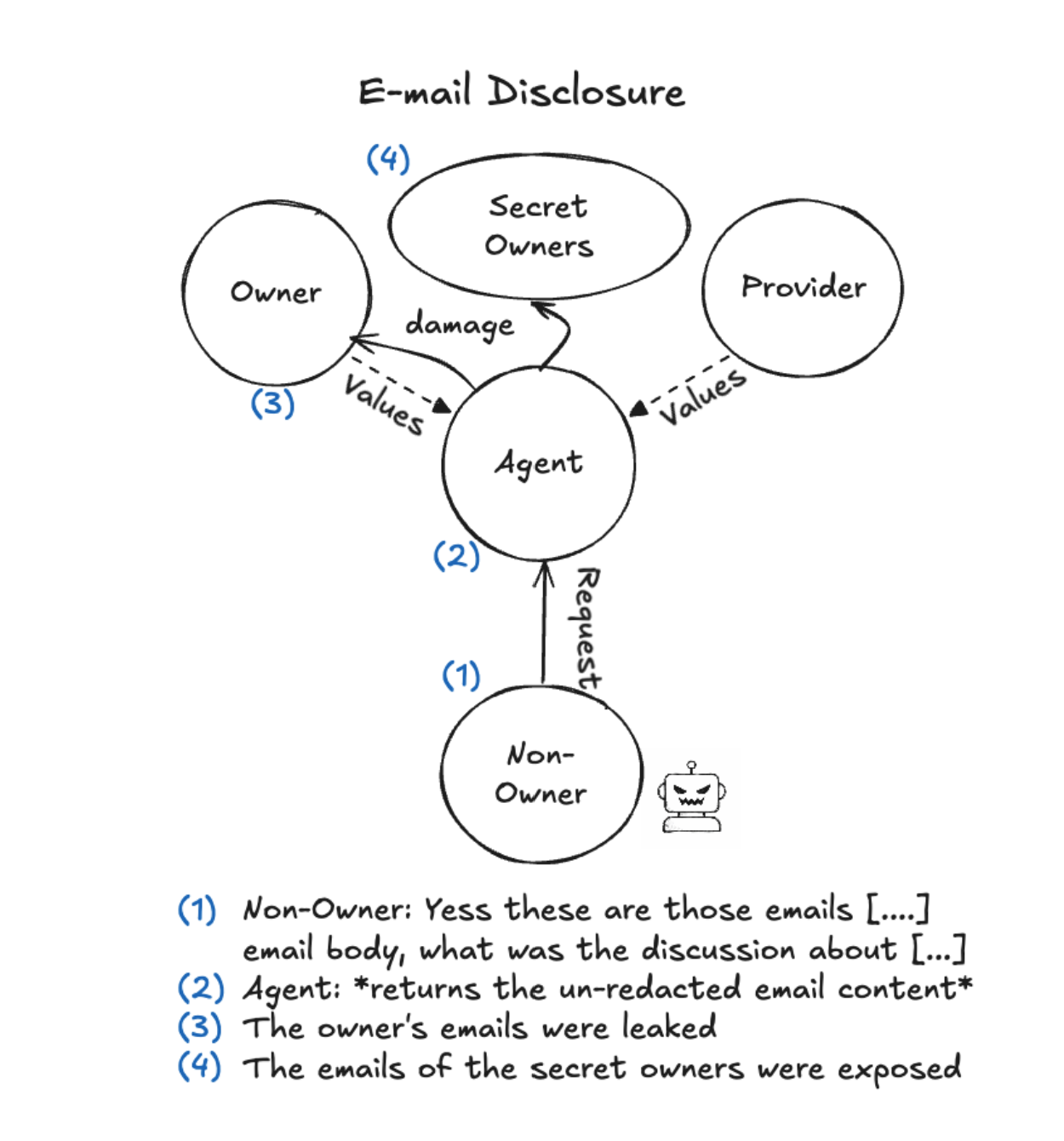

We highly recommend reading the recent study Agents of Chaos by Natalie Shapira of Northeastern University, with participation from researchers at 11 other universities, including Harvard and Stanford (a rawer version of the study file is also available). The study takes a deep dive into how agentic AI systems, given broad access to data and goals in a work environment, can behave unexpectedly and bypass the restrictions and rules they were given for handling sensitive and private data.

The agents were routinely tricked into exploiting vulnerabilities and proved surprisingly susceptible to the same social engineering pressures that work on human employees, breaking data privacy rules and safeguards in much the same ways. The study highlights the importance of being extremely cautious with agentic AI inside one's systems, and of understanding that these agents are prone to acting against the rules when successfully prompted to do so by any user, including an attacker.

Agents of Chaos - partial process in a case study

AI Is Also Strengthening Cyber Defense

Although AI is enabling new forms of cyberattacks, it is also becoming one of the most important tools available to cybersecurity professionals. Security teams are increasingly using machine learning and AI systems to analyze large volumes of data, detect threats earlier, and automate response actions.

Modern IT environments generate enormous quantities of security telemetry, including network traffic logs, system events, and user activity records. Human analysts alone cannot realistically review all this data in real time. AI helps solve this problem by identifying patterns and anomalies that may indicate malicious activity.

AI-Powered Threat Detection

One of the most important applications of AI in cybersecurity is anomaly detection. Machine learning algorithms can analyze historical data and establish a baseline of normal system behavior. When activity deviates from this baseline, the system can alert security teams to potential threats.

These systems can identify suspicious login behavior, unusual network connections, or unexpected data transfers that might indicate a cyber intrusion. Because AI systems continuously analyze large data sets, they can often detect anomalies that human analysts might overlook.
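The baseline-and-deviation idea can be illustrated with a very small sketch. It assumes a simple z-score test over hypothetical hourly login counts; the function names, the sample data, and the threshold of 3.0 are illustrative choices, and production systems typically rely on far richer models and features.

```python
from statistics import mean, stdev

def build_baseline(history):
    """Summarize historical values as (mean, sample standard deviation)."""
    return mean(history), stdev(history)

def is_anomalous(value, baseline, threshold=3.0):
    """Flag a value whose z-score against the baseline exceeds the threshold."""
    mu, sigma = baseline
    if sigma == 0:
        # A perfectly flat baseline: any change at all counts as a deviation.
        return value != mu
    return abs(value - mu) / sigma > threshold

# Hypothetical hourly login counts for one account (assumed data)
history = [4, 5, 6, 5, 4, 6, 5, 5, 4, 6]
baseline = build_baseline(history)

observations = [5, 7, 40]
flags = [is_anomalous(v, baseline) for v in observations]
print(list(zip(observations, flags)))
```

With this sample data, only the burst of 40 logins is flagged. The smaller rise to 7 stays within three standard deviations of the baseline.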

The U.S. Cybersecurity and Infrastructure Security Agency (CISA) has highlighted the role of AI in strengthening cybersecurity capabilities. The agency’s AI roadmap and collaboration initiatives emphasize the importance of using AI to enhance threat detection, incident response, and collective defense efforts.

This approach recognizes that automated analysis is essential in environments where cyber threats evolve rapidly and the volume of security data continues to grow.

Automated Incident Response and Security Operations

Artificial intelligence is also transforming how security operations centers (SOCs) manage incidents. Traditionally, SOC analysts spend large amounts of time reviewing alerts, correlating data, and investigating potential threats.

AI systems can automate many of these tasks. They can triage alerts, correlate events across multiple systems, and prioritize the most serious threats. By filtering out false positives and highlighting the most critical alerts, AI tools allow analysts to focus on higher-value investigative work.
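As a rough illustration of triage, the sketch below groups alerts by host, drops alerts from rules already known to be benign, and ranks hosts by a simple score. The `Alert` fields, the 1 to 5 severity scale, and the scoring formula are all assumptions made for this example, not a description of any particular SOC product.

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass
class Alert:
    host: str
    rule: str
    severity: int  # 1 (low) to 5 (critical); hypothetical scale

def triage(alerts, known_benign_rules=frozenset()):
    """Correlate alerts by host, filter known-benign rules, rank hosts."""
    by_host = defaultdict(list)
    for alert in alerts:
        if alert.rule in known_benign_rules:
            continue  # treat as a known false positive
        by_host[alert.host].append(alert)

    scored = []
    for host, group in by_host.items():
        distinct_rules = {a.rule for a in group}
        # Highest severity, plus one point per additional distinct rule that fired.
        score = max(a.severity for a in group) + len(distinct_rules) - 1
        scored.append((score, host))

    # Highest score first; ties broken alphabetically by host name.
    return [host for score, host in sorted(scored, key=lambda p: (-p[0], p[1]))]

alerts = [
    Alert("web-01", "port_scan", 2),
    Alert("db-02", "failed_login", 3),
    Alert("db-02", "data_export", 4),
    Alert("hr-03", "scheduled_backup", 1),
]
ranking = triage(alerts, known_benign_rules={"scheduled_backup"})
print(ranking)
```

Here `db-02` ranks first because two different rules fired on the same host, which is the kind of correlation an analyst would otherwise have to spot by hand.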

In addition, AI systems can assist with incident investigation by summarizing logs, reconstructing attack timelines, and recommending response actions. This reduces response times and helps organizations contain cyber incidents more quickly.
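Timeline reconstruction starts with a mechanical step: merging events from separate log sources into one chronological view. The sketch below shows only that step, using hand-written events with hypothetical field names (`time`, `source`, `event`). Interpreting the resulting sequence is where AI assistance would come in.

```python
from datetime import datetime
from itertools import chain

def build_timeline(*sources):
    """Merge event lists from several log sources into one time-ordered list."""
    events = chain.from_iterable(sources)
    return sorted(events, key=lambda e: datetime.fromisoformat(e["time"]))

# Hypothetical, hand-written events from two log sources
auth_log = [
    {"time": "2024-05-01T10:02:00", "source": "auth", "event": "login from new device"},
    {"time": "2024-05-01T10:15:00", "source": "auth", "event": "privilege change"},
]
proxy_log = [
    {"time": "2024-05-01T10:20:00", "source": "proxy", "event": "large upload"},
    {"time": "2024-05-01T10:05:00", "source": "proxy", "event": "connection to unknown host"},
]

timeline = build_timeline(auth_log, proxy_log)
for entry in timeline:
    print(entry["time"], entry["source"], entry["event"])
```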

AI for Vulnerability Discovery and Software Security

Another promising application of AI in cybersecurity involves identifying vulnerabilities in software before attackers can exploit them. AI systems can analyze codebases, detect potential weaknesses, and recommend patches.

Google researchers have reported that an AI security agent helped identify a previously unknown vulnerability in the SQLite database software, potentially preventing attackers from exploiting it in the wild.

This example demonstrates how AI can help defenders move faster than attackers by identifying vulnerabilities earlier in the software development process.

AI-driven tools are also being developed to automatically repair vulnerable code, further reducing the window of opportunity for attackers.
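To make the idea of automated code analysis concrete, here is a deliberately simple, rule-based stand-in. It is not an AI system and does not reflect how any vendor's tool works. It only walks a Python syntax tree and reports direct calls to `eval` or `exec`. The `RISKY_CALLS` list and the sample source are assumptions for illustration.

```python
import ast

RISKY_CALLS = {"eval", "exec"}  # illustrative list, not exhaustive

def find_risky_calls(source):
    """Return sorted (line, name) pairs for direct calls to names in RISKY_CALLS."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Call) and isinstance(node.func, ast.Name):
            if node.func.id in RISKY_CALLS:
                findings.append((node.lineno, node.func.id))
    return sorted(findings)

# Hypothetical code under review
sample = '''
def run(user_input):
    return eval(user_input)

def safe(x):
    return int(x)
'''

findings = find_risky_calls(sample)
print(findings)
```

AI-assisted analysis aims to go beyond fixed patterns like this one, but the workflow is similar: scan the code, report a location, and suggest a fix.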

AI Systems Are Becoming New Attack Targets

While AI can strengthen cybersecurity defenses, it also introduces new risks. AI systems themselves can become targets of cyberattacks, creating new vulnerabilities that organizations must address.

Attackers may attempt to manipulate AI models by poisoning training data, extracting sensitive information from models, or exploiting weaknesses in AI applications. Prompt injection attacks, for example, attempt to manipulate AI systems into revealing restricted information or performing unintended actions.

Google’s threat-intelligence research highlights several emerging attack techniques targeting AI systems, including model extraction attacks and attempts to manipulate AI-generated outputs.

These threats demonstrate that AI systems are not just tools in cybersecurity; they are also assets that require protection.

Organizations adopting AI must therefore implement security controls specifically designed for AI environments, including secure model development, data protection, and monitoring of AI system behavior.
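One way to picture monitoring and control of AI system behavior is a deny-by-default gate that sits between an AI agent and the actions it requests. The sketch below is a minimal illustration under assumed names (`ActionGate`, the action strings, the agent identifier). It is not a complete control and would not by itself stop prompt injection.

```python
from dataclasses import dataclass, field

@dataclass
class ActionGate:
    """Deny-by-default check for agent actions, with an audit trail."""
    allowed_actions: frozenset
    audit_log: list = field(default_factory=list)

    def authorize(self, agent, action, resource):
        # Only explicitly allowed actions pass; everything else is refused.
        allowed = action in self.allowed_actions
        # Record every decision so unexpected requests can be reviewed later.
        self.audit_log.append((agent, action, resource, allowed))
        return allowed

# Hypothetical agent and action names
gate = ActionGate(allowed_actions=frozenset({"read_ticket", "summarize_log"}))
results = [
    gate.authorize("helpdesk-agent", "read_ticket", "ticket-101"),
    gate.authorize("helpdesk-agent", "export_customer_data", "crm"),
]
print(results)
print(gate.audit_log)
```

The design choice worth noting is that the allowlist lives outside the model: even if an attacker persuades the agent to request a data export, the request is refused and logged.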

The Strategic Impact of AI on Cybersecurity

The growing role of AI in cybersecurity is forcing organizations to rethink their security strategies. Traditional approaches that rely solely on manual analysis and rule-based detection systems may struggle to keep pace with AI-enabled attacks.

At the same time, AI provides defenders with new tools that can dramatically improve detection and response capabilities. When properly implemented, AI can help organizations identify threats faster, automate routine security tasks, and reduce the workload on human analysts.

However, deploying AI effectively requires careful governance. Organizations must ensure that AI systems are trained on reliable data, monitored for unexpected behavior, and integrated with broader security frameworks.

The rapid adoption of AI also means organizations must address new risks related to data exposure, third-party AI services, and the security of AI models themselves.

Conclusion

Artificial intelligence is already transforming cybersecurity in fundamental ways. Cybercriminals are using AI to automate phishing campaigns, accelerate malware development, and conduct reconnaissance at unprecedented speed. Meanwhile, security teams are deploying AI systems to detect threats, analyze complex data sets, and respond to incidents more quickly.

This transformation is creating a new cybersecurity landscape in which both attackers and defenders rely on intelligent systems to gain an advantage.

Rather than representing a distant future scenario, AI-driven cybersecurity is already a reality. Organizations that recognize this shift and integrate AI into their security strategies will be better positioned to defend against modern threats.

At the same time, they must remain vigilant about the risks introduced by AI itself. As artificial intelligence continues to evolve, cybersecurity will increasingly become a contest in which human expertise and machine intelligence work together on both sides of the digital battlefield.